Supercomputing Is Here!

For some colleges and universities, computing is now

soaring into the stratosphere.

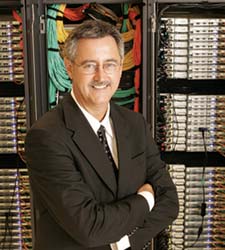

Mike Hickey at Embry-Riddle Aeronautical University

is using a brand-new supercomputer to learn

more about acoustic-gravity waves in the upper

portions of Earth’s atmosphere, waves that

ultimately impact flying conditions.

tackle computing, their time is occupied by laptops and

servers—relatively small-scale stuff. A couple of blades

here, a couple of blades there. Generally, even for network

managers, the processing power rarely stacks up to anything

awe-inspiring. Sometimes, however, computing can be bigger

and broader than many of us can imagine, requiring

more juice than some small nations use in a year. Then, of

course, we find ourselves in the 21st-century realm of high-performance

computing. High-performance computing

efforts at four schools—

Indiana University, the University of Florida, the

University of Utah, and Embry-Riddle

Aeronautical University (FL)—demonstrate that the latest

and greatest in supercomputing on the academic level

far exceeds the computing power that most of us can conceive.

As computing power continues to grow, however,

these tales undoubtedly are only the beginning.

Forecasting Improvements

In the wake of the devastation caused by Hurricane Katrina,

a number of today’s most elaborate high-performance

computing endeavors revolve around finding better ways to

predict everything from everyday showers and snowstorms

to devastating hurricanes and tornadoes. At Indiana University,

researchers in the School of Informatics are using

their high-speed computing and network infrastructure to

help meteorologists make more timely and accurate forecasts

of dangerous weather conditions. The project, which

kicked off with an $11 million grant from the National Science

Foundation in 2003, is dubbed Linked

Environments for Atmospheric Discovery (LEAD).

The LEAD system runs on a series of remote, distributed

supercomputers—a method known as grid computing.

Co-principal Investigator Dennis Gannon, who also serves

as a professor of Computer Science at the university, says

the project is designed to build a “faster than real time”

system that could save lives and help governments better

prepare themselves for looming natural disasters. Today,

the project is in its infancy—as yet in the planning and testing

stages. Ultimately, however, Gannon sees the model

outpacing the current strategy for weather prediction—a

system that, despite constant improvements, still runs

largely on simulations.

“Our goal is nothing short of building an adaptive, on-demand

computer and network infrastructure that responds

to complex weather-driven events,” he says. “We hope to use

this technology to make sure storms never cripple us again.”

The LEAD system pools and analyzes data received from

other sources such as satellites, visual reports from commercial

pilots, and NEXRAD, a network of 130 national

radars that detect and process changing

weather conditions. Down the road, an

armada of newer and smaller ground

sensors dispatched to detect humidity,

wind, and lightning strikes will be part

of the network, too. As weather information

comes in, it is interpreted by special

software agents that are monitoring

the data for certain dangerous patterns.

Once these patterns are identified, the

agents will dispatch the data to a variety

of high-performance computers across

private networks for real-time processing

and evaluation.
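
The monitor-then-dispatch flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not LEAD's actual software: the `Observation` fields, the two alert thresholds, and the site names are all hypothetical, and round-robin assignment is just one simple way to spread flagged data across machines.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """One reading from a sensor or radar (fields are hypothetical)."""
    station: str
    wind_mph: float
    lightning_strikes: int


# Hypothetical alert thresholds, chosen only for illustration.
WIND_ALERT_MPH = 58.0
LIGHTNING_ALERT_COUNT = 10


def looks_dangerous(obs: Observation) -> bool:
    """Flag a reading when either measurement crosses its threshold."""
    return (obs.wind_mph >= WIND_ALERT_MPH
            or obs.lightning_strikes >= LIGHTNING_ALERT_COUNT)


def dispatch(observations, compute_sites):
    """Pair each flagged reading with a compute site in round-robin order."""
    if not compute_sites:
        raise ValueError("at least one compute site is required")
    flagged = [obs for obs in observations if looks_dangerous(obs)]
    return [(obs.station, compute_sites[i % len(compute_sites)])
            for i, obs in enumerate(flagged)]


if __name__ == "__main__":
    readings = [
        Observation("station-1", 20.0, 0),
        Observation("station-2", 75.0, 2),
        Observation("station-3", 30.0, 14),
    ]
    # Only the two readings that cross a threshold are sent out.
    for station, site in dispatch(readings, ["site-a", "site-b"]):
        print(f"{station} -> {site}")
```

A real agent would watch for far richer patterns than two fixed thresholds; the point here is only the shape of the pipeline: filter incoming data, then hand the interesting part to whichever machine is available.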

In most cases, these collection devices

will send weather data out for processing on computers in the IU network. Sometimes,

however, spurred by an additional

$2 million grant from the National Science

Foundation (NSF),

the software agents will dispatch data to

computers on a broader distributed computing

network known as TeraGrid. The grid is a national

network that allows scientists across

the nation to share data and collaborate.

Under this system, huge computers in San

Diego, Indiana, and Pittsburgh are linked

via a 20GB connection to facilitate

cooperation. The result: several thousand

processors at a participating school’s fingertips,

on demand.

“My files may come from San Diego

and my computing facility may be in

Pittsburgh, but the network is such that

the facility in Pittsburgh doesn’t care

where the data’s from,” explains Gannon.

“As you can see, this kind of leverage

opens up a host of new doors in

terms of what kind of weather data we

can process with supercomputers, and

how we can do it.”

The University of Florida’s new cluster

boasts tightly coupled nodes that act

like a single computer; no more

disparate calculations attempting to

handle huge computations.

Clusters: Loose or Tightly Coupled?

The science of supercomputer processing

is far from easy. Generally, the

“engines” of supercomputers are gaggles

of processing power called “nodes.”

Each node comprises a series of

processors, and each processor differs

from the others depending on how

sophisticated the supercomputer is. By

and large, these nodes usually boast two

to four central processing units (CPUs)

with up to 4GB of RAM. To put this into

perspective, the best nodes basically are

the same as four really expensive personal

computers. And most supercomputers

have at least 100 of these

nodes—the equivalent of 400 of the

fastest and most efficient computers

money can buy.

Still, not all supercomputers are created

equal. Technologists at the High

Performance Center (HPC) at the University

of Florida recently unveiled a

brand-new cluster: a 200-node supercomputer

that’s bigger than anything the

school has had before. With the speed of

this machine, HPC Director Erik Deumens

says UF researchers will embark

on new projects to investigate the properties

of molecular dynamics, the ins

and outs of aerodynamic engineering,

and climate modeling projects of their

own. Deumens calls the bulk of these

projects “multi-scale”—an approach

that takes into account mathematical

“You try to describe a certain piece of

a problem with a particular methodology,

but you know that in some part,

something more interesting is happening,

so you use the magnifying glass of

advanced calculation,” he says, pointing

to one researcher who is studying the

molecular interaction of heated silicon

when engineers etch microchips. “The

only way to make sure you’re not overwhelmed

with numbers is to look at

problems with enough computing power

to answer multiple questions at once.”

Deumens notes that with a 200-node

supercomputer, connections between

nodes are critical. To make sure the

machine functions properly, HPC

turned to Cisco Systems for all of the networking connections

between nodes. According to Marc

Hoit, interim associate provost for Information

Technology, the vendor also is

helping UF connect all of its clusters on

campus so HPC can perform more grid-based

computations. All told, Hoit estimates

that soon, more than 3,000 CPUs

will be part of this grid. UF also will

contribute to the Open Science Grid, an international

infrastructure in the vein of TeraGrid, though considerably larger.

One key differentiator between the

expanded grid and UF’s new cluster is

the way in which the nodes are coupled.

In the UF cluster, the nodes are more

tightly coupled, meaning that they act

more like one single computer, and can

be harnessed to perform large-scale

mathematical computations quickly, as

one unit. In the grid, however, the nodes

are loosely coupled, meaning they all

have other computing responsibilities,

and likely are not ready to handle huge

calculations at one time. The “loosely

coupled” strategy is perfect for small

equations with small sets of data, such

as genetic calculations. For weather and

aerodynamic simulations, however,

Hoit notes the tightly coupled approach

is a must.

“Many people often make simple

statements about CPUs, and say they

can apply all the power to solving one

large problem,” he says. “But a tightly

coupled approach can help achieve efficiency

for large problems, too.”

WHAT IS MYRINET?

Myrinet is a networking system designed

by Myricom; it has fewer

protocols than Ethernet, and so is faster

and more efficient. Physically, Myrinet

consists of two fiber optic cables,

upstream and downstream, connected

to the host computers with a single

connector. Machines are connected via

low-overhead routers and switches, as

opposed to connecting one machine

directly to another. Myrinet includes a

number of fault-tolerance features,

mostly backed by the switches. These

include flow control, error control, and

“heartbeat” monitoring on every link.

The first generation provided 512

Megabit data rates in both directions,

and later versions supported 1.28 and

2 Gigabits. The newest, “fourth-generation

Myrinet,” supports a 10 Gigabit data

rate, and is interoperable with 10

Gigabit Ethernet. These products started

shipping in September 2005.

Myrinet’s throughput is close to the

theoretical maximum of the physical

layer. On the latest 2.0 Gigabit links,

Myrinet often runs at 1.98 Gigabits of

sustained throughput—considerably

better than what Ethernet offers, which

varies from 0.6 to 1.9 Gigabits,

depending on load. However, for supercomputing,

the low latency of Myrinet

is even more important than its throughput

performance, since, according to

Amdahl’s Law, a high-performance parallel

system tends to be bottlenecked

by its slowest sequential process,

which is often the latency of transmission

of messages across the network in

all but the most embarrassingly parallel

supercomputer workloads.
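
To make the Amdahl's Law point concrete, here is a minimal Python sketch of the standard formula, speedup = 1 / ((1 - p) + p / n), where p is the fraction of the work that can run in parallel and n is the number of processors. The 95 percent parallel fraction used in the demo is an assumption picked for illustration, not a figure from any of the machines described here.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Ideal speedup when only part of a job can be spread across workers.

    parallel_fraction: share of the run time that parallelizes (0.0 to 1.0).
    workers: number of processors sharing the parallel part.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / workers)


if __name__ == "__main__":
    # Assumed workload: 95% parallel, 5% serial (for example, time spent
    # waiting on messages crossing the network).
    for workers in (2, 16, 256, 1024):
        print(workers, round(amdahl_speedup(0.95, workers), 2))
```

However many processors are added, the speedup in this example can never reach 20, because the 5 percent serial share does not shrink. That is why trimming network latency, which sits in the serial share, can matter more than raw throughput.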

And Now for the Metacluster…

Running a giant cluster like the one at

UF is remarkable, but imagine operating

something five times that big. Such

is life for Julio Facelli, director of the

Center for High-Performance Computing

(CHPC) at the University of Utah.

The Center is responsible for providing

high-end computer services to advanced

programs in computational sciences and

simulations. Recently, via a grant from

the National Institutes of Health (NIH), CHPC has purchased a

metacluster to tackle the new generation

of bioinformatics applications, which

comprise the nitty-gritty study of genetic

code and similarly complicated equations.

Facelli says the machine is one

of the largest of its kind in the academic

world.

The new metacluster boasts more

than 1,000 64-bit processors in dense

blades from Angstrom Microsystems. The metacluster

has been configured into five subsystems,

including a parallel cluster with

256 dual nodes, a “cycle farm” cluster

with 184 dual nodes, a data-mining

cluster with 48 dual nodes, a long-term

file system with 15 terabytes of storage,

and a visualization cluster driven by a

10-node cluster. Most of these clusters

are connected by Gigabit Ethernet. The

parallel cluster runs on Myrinet, a networking

system designed by Myricom that has fewer protocols

than Ethernet, and therefore is

faster and more efficient. (See box above, “What is Myrinet?”)

“When we started this program eight

or nine years ago, it was possible only to

do simulations in one dimension,” says

Facelli. “Today, we are computing in

three dimensions and running calculations

that we never dreamed of being

able to run.”

In addition to these resources, the

metacluster boasts a “condominium”-

style sub-cluster in which additional

capacity can be added for specific

research projects. With this feature,

Facelli says CHPC uses highly advanced

scheduling techniques to provide seamless

access to heterogeneous computer

resources necessary for an integrated

approach to scientific research in the

areas of fire and meteorology simulations,

spectrometry, engineering, and

more. Additional specialized servers are

available for specific applications such

as large-scale statistics, molecular modeling,

and searches in GenBank, a database

of genetic data. CHPC is developing

several cluster test beds to implement

grid computing, too.

Down the road, CHPC plans to add

two dual nodes to its “condominium”-

style cluster for a proposed study of

patient adherence to poison control

referral recommendations. As Facelli

explains it, the school seeks to use

machine learning methods for feature

selection and predictive modeling—an

enterprising approach, considering that

prior to this, no researcher or research

institution had ever implemented high-performance

computing to accomplish

such a challenge. CHPC will support

these nodes for the duration of the project

and will collaborate with the project investigators in the computational aspects

of the research. Afterward, Facelli says,

the nodes will be subsumed back into

the system.

“You can never have too many nodes

in your metacluster,” he quips. “We’re

excited about the possibilities of what

these will bring.”

WHAT IS BEOWULF?

Aside from being a classic work of

early literature, Beowulf is a type of

computing cluster. The term refers to a

design for high-performance parallel

computing clusters built on inexpensive

personal computer hardware. Originally

developed by Donald Becker at NASA,

Beowulf systems are now deployed

worldwide, chiefly in support of

scientific computing. There is no particular

piece of software that defines a

cluster as a Beowulf. Commonly used

parallel processing libraries include

Message Passing Interface (MPI) and

Parallel Virtual Machine (PVM), a software

tool developed by the University

of Tennessee, Emory University (GA),

and The Oak Ridge National Laboratory. Both of these permit

the programmer to divide a task among

a group of networked computers, and

collect the results of processing.
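
The divide-and-collect pattern that MPI and PVM support can be sketched in Python. Note the substitution: this example uses threads from Python's standard `concurrent.futures` module on a single machine as a stand-in for networked nodes, so it shows the shape of the technique rather than real MPI or PVM code. The function names and the sum-of-squares task are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(items, parts):
    """Divide items into `parts` contiguous chunks of nearly equal size."""
    size, extra = divmod(len(items), parts)
    chunks, start = [], 0
    for i in range(parts):
        # The first `extra` chunks each take one leftover item.
        end = start + size + (1 if i < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks


def partial_sum_of_squares(chunk):
    """The work one 'node' does on its own share of the data."""
    return sum(x * x for x in chunk)


def scatter_gather(items, workers=4):
    """Scatter chunks to workers, then gather and combine their results."""
    chunks = split_into_chunks(items, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(partial_sum_of_squares, chunks))
    return sum(partials)


if __name__ == "__main__":
    print(scatter_gather(list(range(1000)), workers=4))
```

On a real Beowulf cluster each chunk would travel over the network to a separate machine, which is where the cost of message passing comes in.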

Flying High

Another school that has researchers

excited about the future is Embry-Riddle

Aeronautical University (FL). There, in

the school’s four-year-old Computational

Atmospheric Dynamics (CAD) laboratory,

Mike Hickey, associate dean of

the College of Arts and Sciences, is

using a brand-new supercomputer to

learn more about acoustic-gravity waves

in the upper portions of Earth’s atmosphere.

Driving Hickey’s research is a

131-node, 262-processor Beowulf cluster

(see box above), which runs simulations

of waves propagating through the

atmosphere. These waves ultimately

impact flying conditions, which is precisely

why the research is of such value

to a school like Embry-Riddle.

Such simulations used to take three or

four days to run; with the power of the

new machine, however, Hickey can run

them in a matter of hours. Elsewhere at

the school, other researchers are turning

to Beowulf to speed up projects of their

own. Hickey points to a number of plasma

physicists who are calling upon the

computer to simulate plasma flow and

interactions, and engineers who are running

simulations of the flow of gases

through turbine engines. One professor

even uses the system to analyze information

from a database of all the commercial

airline flights in the US for the

last decade; from this information, the

professor is trying to predict flight

delays down the road.

“Especially at an engineering school

like ours, there’s a lot of numerically

intensive simulation work on campus,”

says Hickey, who explains that in general,

Beowulf clusters are groups of similar

computers running a Unix-like

operating system such as GNU/Linux

or BSD. “The best way around [the

demand for so much simultaneous simulation

work on one campus] was to try

and get a computer that serves everybody’s

needs.”

Still, the emergence of Embry-Riddle’s

supercomputer has not been without

hiccups. The first challenge revolved

around program code: Researchers can’t

just take the code that runs on a single

processor, move it over to the new

machine, and run it; instead, code for

the supercomputer needs to be heavily

modified in order to take advantage of

the multiple processing capabilities. Consequently,

last summer, Embry-Riddle

ran a workshop to educate some professors

and graduate students about how to

manipulate data for the new machine.

Another subject of the workshop: Message

Passing Interface (MPI), a message-passing standard

that users must learn in

order to coordinate how the cluster’s processors

exchange data and signal when each is ready to receive

new material.

Embry-Riddle officials also have

tackled challenges of a more logistical

nature. CIO Cindy Bixler says that

when Hickey came to her and requested

the supercomputer, the school’s facilities

department didn’t understand that

investing in a machine of that magnitude

would require the school to rethink

its server room completely. Computer

clusters run hot, so the school had to

invest in additional air-conditioning

units. Bixler then brought in an engineer

to study the server room’s air flow and

figure out where to put the new cooling

device. Finally, of course, was the issue

of electricity—with the new machine,

Embry-Riddle’s energy bills went

through the roof.

“You can’t just flip the switch on a

high-performance computer and expect

everything else to work itself out,”

Bixler says. “This kind of effort takes

considerable planning, and in order to

avoid surprises, schools need to be

ready before they buy in.”