The Newest Media and a Principled Approach for Integrating Technology Into Instruction

- By Joel Smith, Susan Ambrose

- 06/03/04

When and how should new media be incorporated into instruction? Two leaders

in instructional technology and cognitive science from Carnegie Mellon University

offer concrete suggestions from their experience, illustrated by applications

of new media by the Open Learning Initiative at CMU.

“New media in instruction?” Déjà vu. Didn’t

we write that article more than a decade ago? Wasn’t it about HyperCard

and JPEGs and Internet resources for teaching and learning? Isn’t that

“old media” yet? Some of the “New Media Centers” originally

sponsored by Apple Computer are still here, but now they are populated with

“iLife.” The “new” in new media is ever-changing. There

will always be novel opportunities and technologies, but there will also be

not-so-novel questions about the integration of new media in education. As Clark

and Mayer (2003) remind us, “What we have learned from all the media comparison

research is that it’s not the medium, but rather the instructional methods

that cause learning.” This is a reflection on the enduring question of

the relationship between the “newest media” and instructional methods.

Even the older “new media” are still changing education. The advent

of course management systems has meant a much broader community of faculty now

using images, graphics, sound, video, and computer simulations as instructional

materials. On top of that, newer technologies and their applications spring

up constantly. What are the “new media” of 2004? Some candidates

are: virtual worlds, gaming environments, blogs, wikis, intelligent agents,

iPods, MP3 files and players, institutional repositories, and so forth. Technologies

and adopters change, but the questions endure. Can these information technologies,

in fact, add value to learning? Given the evolution of new media, how can educators

determine what to use, when, and why? Hence, our guiding question is, how should

educators assess the effectiveness of new media using performance-based measures—not

relying only on the often-used survey of student satisfaction? This is, we believe,

the question that has largely eluded a comprehensive answer throughout the recent

history of “new media.”

Informing Instructional Design

At Carnegie Mellon, the Eberly Center for Teaching Excellence and the Office

of Technology for Education have forged a close relationship to consult with

faculty colleagues on effective teaching approaches based in learning theory,

including the integration of technology into course design and classroom pedagogy.

One strategy we employ applies equally to any new approach in teaching, whether

it employs “new media” or not. That strategy is to apply some of

the best current knowledge from cognitive and learning sciences to assess proposed

teaching innovations.

We ask our colleagues to think in a systematic way about any new pedagogical

strategy, including the use of media. Couched in terms of use of “new

media,” some of the fundamental questions we pose include:

1. What is the educational need, problem, or gap for which use of new media might potentially enhance learning?

2. Would the application of new media assess students’ prior knowledge and either provide the instructor with relevant information about students’ knowledge and skill level or provide help to students in acquiring the necessary prerequisite knowledge and skills if their prior knowledge is weak? (Clement 1982, Minstrell 2000)

3. Would the use of new media enhance students’ organization of information given that organization determines retrieval and flexible use? (DiSessa 1982, Holyoak 1984)

4. Would the use of new media actively engage students in purposeful practice that promotes deeper learning so that students focus on underlying principles, theories, models, and processes, and not the superficial features of problems? (Craik and Lockhart 1972, NRC 1991, Ericsson 1990)

5. Would the application of new media provide frequent, timely, and constructive feedback, given that learning requires accurate information on one’s misconceptions, misunderstandings, and weaknesses? (Black and Wiliam 1998, Thorndike 1931)

6. Would the application of new media help learners develop the proficiency they need to acquire the skills of selective monitoring, evaluating, and adjusting their learning strategies (some call these “metacognitive skills”), because these skills enhance learning and, without them, students will not continue to learn once they leave college? (Matlin 1989, Nelson 1992)

7. Would the use of new media adjust to students’ individual differences given that students are increasingly diverse in their educational backgrounds and preferred methods of learning? (NRC 2000, Galotti 1999)

Each of these questions carries as its underlying presupposition a result

from cognitive science. Collectively, we might call them “cognitive

desiderata” for new teaching strategies. For some of the questions, we have cited

in parentheses the research that justifies that presupposition.

To give an example, question 2 is based on the principle

that prior knowledge as the basis for building new knowledge can facilitate,

interfere with, or distort the integration of incoming information. In other

words, prior knowledge is the lens through which we view all new knowledge,

so understanding [and then addressing] students’ misperceptions when

they enter a course will aid learning. This principle is supported by many

researchers, including J.J. Clement and J. Minstrell, whose work is

cited with that question above.

However “technocool” or visually attractive or absorbing a piece

or collection of new media is, unless its instructional application plausibly

justifies an answer of “yes” to the questions above, prima facie

it is unlikely to affect educational outcomes. In contrast, if a proposed use

warrants an answer of “yes” to one or more of the questions above,

it stands a chance of making a difference. Of course, the ultimate test of whether

any application of new media is instructionally significant is determined by

empirical evaluation of its impact, an area that has too long been ignored in

higher education in general.

New Media in Practice

Carnegie Mellon is currently undertaking a major project to develop Web-based

courses and course materials that make use of some kinds of new media and are

based on what we know about learning from the cognitive and learning sciences.

This project is known as the Open Learning Initiative (OLI) [http://www.cmu.edu/oli].

Faculty colleagues have brought to this project applications of new media that

plausibly meet the “cognitive desiderata.”

StatTutor

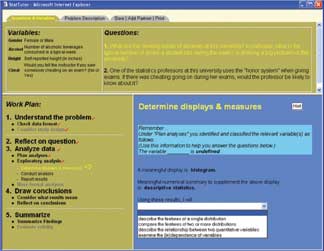

Consider one piece of the OLI, the StatTutor developed by Dr. Marsha Lovett,

a cognitive psychologist, in collaboration with the Statistics Department

at Carnegie Mellon. This is a Web-based tool for providing statistics students

a scaffolded environment for learning how to represent, structure, and solve

problems in introductory statistics. The creation of this instructional environment

was a response to the concern of statistics educators that students often

leave courses without the desired statistical reasoning skills and transfer

ability, rendering their learning limited in use (Lovett, 2001). This establishes

“need” per our first question. StatTutor also has strong affirmative

answers to questions 3, 4, 5, and 6. The StatTutor environment appears in

Figure 1.

Figure 1: StatTutor

In the left-hand panel, the StatTutor provides the student with a “Work

Plan,” a consistent way for organizing analysis of the problem. The

students click on each step as they address the problem. This approach provides

“purposeful practice” that focuses on the problem-solving process

inherent in statistical reasoning (question 4). This “scaffolding”

is removed as students move through the course so that they internalize this

way of organizing an approach to problem-solving (question 3).

Students are challenged to respond to a large number of questions about the

problems in the right-hand panel as they step through the process. This interactive

feature is tied to a version of a “cognitive tutor” (Anderson

et al., 1995), which provides them with both feedback on incorrect answers

and hints (note the Hint button) when they are stuck. Therefore StatTutor

satisfies the criterion of providing “frequent, timely, and constructive

feedback” (question 5).
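The feedback-and-hint interaction described above can be illustrated with a deliberately small sketch. This is not StatTutor's code and not the cognitive tutor architecture of Anderson et al.; it is a toy model, and the names `Step` and `StepTutor`, the sample question, and the hint texts are all hypothetical. It only shows the two behaviors the paragraph names: immediate feedback on an answer, and progressively more specific hints on request.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    """One step of a problem: a prompt, its expected answer, and ordered hints."""
    prompt: str
    answer: str
    hints: list[str] = field(default_factory=list)


@dataclass
class StepTutor:
    """Toy tutor for a single step (hypothetical, for illustration only)."""
    step: Step
    hints_given: int = 0

    def check(self, response: str) -> str:
        """Return immediate feedback on a student's response."""
        if response.strip().lower() == self.step.answer.lower():
            return "Correct."
        return "Not quite. Try again, or ask for a hint."

    def hint(self) -> str:
        """Return the next hint; repeat the last one once all have been shown."""
        if not self.step.hints:
            return "No hints available for this step."
        index = min(self.hints_given, len(self.step.hints) - 1)
        self.hints_given += 1
        return self.step.hints[index]


# Example interaction with a made-up statistics question.
tutor = StepTutor(Step(
    prompt="Which display suits a single quantitative variable?",
    answer="histogram",
    hints=[
        "Think about the type of variable you are describing.",
        "A histogram shows the distribution of one quantitative variable.",
    ],
))
print(tutor.check("pie chart"))
print(tutor.hint())
print(tutor.check("Histogram"))
```

A real cognitive tutor compares each student action against a model of the problem-solving process rather than a single stored answer; the sketch keeps only the surface behavior that question 5 asks about.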

Taken together, the deeper learning of processes involved in statistical

reasoning and the feedback provide students with metacognitive skills (question

6) that will be applicable in multiple contexts for multiple problems.

Virtual Lab

Another course under development for the Open Learning Initiative is in introductory

chemistry. One fully developed tool for this course is Virtual Lab, the work

of Dr. David Yaron, appearing in Figure 2.

Figure 2: Virtual Lab

Professor Yaron has created this powerful open-ended virtual lab environment

(certainly something qualifying as “new media”), not as a replacement

for wet labs (although it might serve that purpose for populations that don’t

have any access to wet labs). Rather, his goal is to change the nature of

how students think about solving problems in chemistry.

To the first question of our desiderata—“What is the educational

need, problem, or gap for which use of new media might potentially enhance

learning?”—he answers: “Typically, students solve homework

problems in chemistry by thumbing back through the chapter to find the appropriate

equations into which they can “plug in” the numbers given in the

problem, and this is a poor way to learn how chemists solve problems.”

By using the Virtual Lab, he can change the nature of the problems presented

to students. Rather than problems of the form: “An N molar solution

of X and an M molar solution of Y are mixed together. What is the pH of the

resulting buffer solution?” the problems can be formulated as “Go

to the virtual lab and create a buffer solution with a desired pH.”

We believe this type of activity “promotes deeper learning,” thus

yielding an affirmative answer to question 4 and, when the questions are structured

properly, lets different students solve problems in different ways (question

7).
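To make the contrast between the two problem forms concrete, here is a minimal sketch that assumes the buffer is a weak acid mixed with its conjugate base and that the Henderson-Hasselbalch approximation applies. The function names are hypothetical and nothing here is taken from the Virtual Lab itself. The first function is the "plug in" calculation; the second inverts it, which is the starting point for the design-style task of creating a buffer with a desired pH.

```python
import math


def buffer_ph(pka, acid_molarity, acid_volume_l, base_molarity, base_volume_l):
    """pH of a weak acid / conjugate base mixture (Henderson-Hasselbalch).

    Both species end up in the same final volume, so the concentration
    ratio equals the mole ratio.
    """
    moles_acid = acid_molarity * acid_volume_l
    moles_base = base_molarity * base_volume_l
    return pka + math.log10(moles_base / moles_acid)


def base_to_acid_ratio(pka, target_ph):
    """Mole ratio of conjugate base to acid needed to reach a target pH."""
    return 10 ** (target_ph - pka)


# "Plug in" form: equal moles of acid and conjugate base give pH == pKa.
# 4.76 is the commonly quoted pKa of acetic acid at 25 degrees C.
print(buffer_ph(4.76, 0.10, 0.050, 0.10, 0.050))

# Design form: what base-to-acid ratio yields a buffer at pH 5.0?
print(base_to_acid_ratio(4.76, 5.0))
```

The design form leaves the student to choose concentrations and volumes that achieve the ratio, which is why different students can reach the same target in different ways.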

Virtual Lab activities were the primary mode of practice with course concepts

and material for three out of the four instructional units (thermodynamics,

equilibrium, and acid-base chemistry) this past semester at Carnegie Mellon.

The Virtual Lab activities engaged students in new modes of interaction, such

as experimental design and comparison of different chemical models, and used

realistic contexts, such as acid mine drainage and design of a chemical solution

that causes a protein to adopt a specific configuration. Student performance

on end-of-unit exams containing traditional assessment items was equivalent

to or better than in past years when the virtual lab was not used. While feedback

is not currently part of this experience, our questions point us toward the

need for it and the Open Learning Initiative is adding mini cognitive tutors

to the Virtual Lab so that students can get timely feedback.

Enduring Goals

We recognize that satisfying some or even all of the cognitive desiderata doesn’t

guarantee that an application of new media will succeed. Obviously, recent research

in human-computer interaction provides vital information to those creating eLearning

environments.

The remaining piece, however, in the application of any new media is careful

evaluation of actual impact. The Open Learning Initiative, following the long-standing

practices of the Eberly Center at Carnegie Mellon and the Learning Research

and Development Center at the University of Pittsburgh (an evaluation partner

in the OLI), engages in various kinds of evaluation of the impact of new media

in its courses.

Some techniques are based on a unique feature of digital learning

environments: their capacity to record every choice a student makes in problem-solving.

OLI courses and media tools are being instrumented to record student performance,

both in learning environments like StatTutor and the Virtual Lab and in associated

online assessments. The result is a test bed for experimenting with different

kinds of educational uses of new media. This type of evaluation, while time-consuming

and expensive, holds, we believe, great potential. Other assessment methods

used to evaluate OLI uses of new media are more traditional: pre-test, post-test

comparisons, think-aloud protocols, and the like.
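As a rough illustration of the two kinds of evidence mentioned above, the sketch below records student actions as events, summarizes them per student, and computes a simple pre-test/post-test difference. It is not the OLI's instrumentation: the `Event` fields, the action labels, and the sample data are all assumptions made for the example.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Event:
    """One recorded student action (hypothetical schema)."""
    student_id: str
    step: str
    action: str          # "attempt" or "hint"
    correct: bool = False


def summarize(events):
    """Per-student counts of attempts, correct attempts, and hint requests."""
    summary = defaultdict(lambda: {"attempts": 0, "correct": 0, "hints": 0})
    for e in events:
        row = summary[e.student_id]
        if e.action == "hint":
            row["hints"] += 1
        elif e.action == "attempt":
            row["attempts"] += 1
            row["correct"] += int(e.correct)
    return dict(summary)


def mean_gain(pre, post):
    """Mean of (post - pre) over students who took both tests."""
    shared = pre.keys() & post.keys()
    return mean(post[s] - pre[s] for s in shared)


# Made-up log and scores, for illustration only.
log = [
    Event("s1", "choose-display", "attempt", correct=False),
    Event("s1", "choose-display", "hint"),
    Event("s1", "choose-display", "attempt", correct=True),
    Event("s2", "choose-display", "attempt", correct=True),
]
print(summarize(log))
print(mean_gain({"s1": 40, "s2": 55}, {"s1": 70, "s2": 65}))
```

A raw score difference is only a first look; an actual evaluation would need a comparison group and appropriate statistical tests before attributing any gain to the media.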

New media, in their many forms, do offer education the opportunity to deal with

both intractable teaching and learning problems and the economic challenges

facing post-secondary education. But the usefulness of each new wave must confront

enduring questions. Some of those questions are captured in our “cognitive

desiderata” listed above. Others are represented by long-standing best

practices in evaluation of instruction. Whatever our personal attraction to

virtual labs or immersive digital worlds or multiplayer games, we must keep

our eye on the goal—improving learning. The enduring questions provide

us with a powerful framework within which to deploy new media in ways that will

make a difference in education. That is the thread that should run through the

constant change in media that digital technology will bring. The technology

changes, but our goals and evaluative standards as educators endure.

References

Anderson, J. R., Corbett, A. T., Koedinger, K., & Pelletier, R. (1995).

“Cognitive Tutors: Lessons Learned.” The Journal of the Learning

Sciences, 4, 167-207.

Black, P., & Wiliam, D. (1998). “Assessment and Classroom Learning.”

Assessment in Education, 5(1), 7-73.

Clark, R., & Mayer, R. E. (2003). e-Learning and the Science of Instruction.

San Francisco, CA: Pfeiffer.

Clement, J.J. (1982). “Students’ Preconceptions in Introductory

Mechanics.” American Journal of Physics, 50, 66-71.

Craik, F. I. M., & Lockhart, R. S. (1972). “Levels of Processing:

A Framework for Memory Research.” Journal of Verbal Learning and

Verbal Behavior, 11, 671-684.

DiSessa, A. (1982). “Unlearning Aristotelian Physics: A Study of Knowledge-Based

Learning.” Cognitive Science, 6, 37-75.

Ericsson, K.A. (1990). “Theoretical Issues in the Study of Exceptional

Performance.”

In K.J. Gilhooly, M.T.G. Keane, R.H. Logie, and G. Erdos (Eds.). Lines

of Thinking: Reflections on the Psychology of Thought. New York: John

Wiley & Sons.

Galotti, K. (1999). Cognitive Psychology In and Out of the Laboratory

(2nd ed.). Belmont, CA: Brooks/Cole Publishing Co.

Holyoak, K. J. (1984). “Analogical Thinking and Human Intelligence.”

In R. J. Sternberg (Ed.), Advances in the Psychology of Human Intelligence,

Vol. 2, pp. 199-230. Hillsdale, NJ: Erlbaum.

Lovett, M. (2001). “A Collaborative Convergence on Studying Reasoning

Processes: A Case Study in Statistics.” In S. M. Carver and

D. Klahr (Eds.), pp. 347-384, Cognition and Instruction: Twenty-Five Years

of Progress. Mahwah, New Jersey: Lawrence Erlbaum Associates.

Matlin, M. W. (1989). Cognition. NY, NY: Harcourt, Brace, Jovanovich.

Minstrell, J. (2000). “Student Thinking and Related Assessment: Creating

a Facet-Based Learning Environment.” In Committee on the Evaluation

of National and State Assessments of Educational Progress. N.S. Raju, J.W.

Pellegrino, M.W. Bertenthal, K.J. Mitchell and L.R. Jones (Eds.), Grading

the Nation’s Report Card: Research from the Evaluation of NAEP

(pp. 44-73). Commission on Behavioral and Social Sciences and Education. Washington,

DC: National Academy Press.

National Research Council (1991). In D. Druckman and R.A. Bjork (Eds.), In

the Mind’s Eye: Enhancing Human Performance. Washington, D.C.:

National Academy Press.

National Research Council (2000). How People Learn: Brain, Mind, Experience,

and School. Washington, DC: National Academy Press.

Nelson, T. A. (1992). Metacognition. Boston, MA: Allyn & Bacon.

Thorndike, E.L. (1931). Human Learning. New York: Century.