Supercomputers at UT Austin Make Black Hole Journey Possible

- By Dian Schaffhauser

- 04/23/19

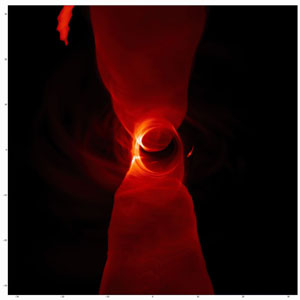

Still from a simulation of hot gas falling into the black hole at the center of the Milky Way (Source: Lia Medeiros, Chi-Kwan Chan, Feryal Özel, Dimitrios Psaltis)

Supercomputing resources at the Texas Advanced Computing Center (TACC), hosted at the University of Texas at Austin, played a role in processing data related to the first image of a black hole, which made headlines around the world when it was released on April 10. The picture showed a bright ring of light bent by the gravity of a black hole that researchers estimate at 6.5 billion times the mass of our sun, located 53 million light years away at the center of the galaxy Messier 87. Three TACC systems (Stampede1, Stampede2 and Jetstream) helped make the journey possible.

The image was produced from data gathered in 2017 by the Event Horizon Telescope (EHT), a network of eight high-altitude radio telescopes around the world that together act as a single Earth-sized observatory, capable of capturing distant radio waves with greater clarity than any instrument built before it. The results were published in a series of papers in The Astrophysical Journal Letters on the same day the image was released.

"For decades, we have studied how black holes swallow material and power the hearts of galaxies," said Ramesh Narayan, a Harvard University professor and EHT researcher who used TACC resources in support of the project, in an article about the work. "To finally see a black hole in action, bending its nearby light into a bright ring, is a breathtaking confirmation that supermassive black holes exist and match the appearance expected from our simulations."

The Stampede clusters were used to model the physical properties of M87 and predict observable features of its black hole. Those predictions were then compared with the actual EHT observations to validate a range of models, according to the article, "including properties of accretion disks — the matter swirling around the edge of the black hole — and jets — energy spinning out of a black hole and shooting through space."

Researchers from the Event Horizon Telescope collaboration used Stampede2 to perform relativistic simulations of M87. These were compared with data from the radio telescopes to help confirm the final image. (Source: Texas Advanced Computing Center)

A group of researchers from the University of Arizona used Jetstream, a large-scale cloud environment hosted at both TACC and Indiana University, to build data analysis pipelines for combining the vast amounts of data collected by the geographically distributed observatories and for sharing those data with other researchers around the world. (Last year, Campus Technology recognized Jetstream with an Impact Award for extending scientific and research computing resources to more higher education communities.)

"New technologies such as cloud computing are essential to support international collaborations like this," said Chi-kwan Chan, leader of the EHT Computations and Software Working Group and an assistant astronomer at the University of Arizona. "The production run was actually carried out on Google Cloud, but much of the early development was on Jetstream. Without Jetstream, it is unclear that we would have a cloud-based pipeline at all."

The work is expected to continue for many years. "These groundbreaking results show how discovery and imagination are fueled by advanced computing systems combined with the talented researchers and developers that use them," said Niall Gaffney, TACC's director of Data Intensive Computing. "This is just the beginning of our understanding of the extreme gravitational environments of black holes. We at TACC will continue to provide the world-class support and services that lead to these and other fundamental discoveries about the nature of the universe in which we live."

About the Author

Dian Schaffhauser is a former senior contributing editor for 1105 Media's education publications THE Journal, Campus Technology and Spaces4Learning.