Cloud Security Alliance Report Explores Potential of AI for 'Offensive Security'

A new paper examines how advanced AI can help perform adversarial testing with red/black teams and provides recommendations for organizations to do just that.

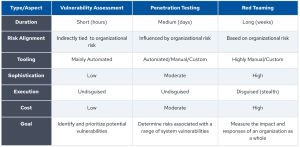

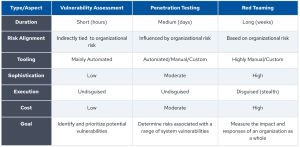

Published on Aug. 6 by Cloud Security Alliance (CSA), the "Using AI for Offensive Security" paper examines AI's integration into three offensive cybersecurity approaches:

- Vulnerability assessment: the automated identification of weaknesses using scanners.

- Penetration testing: simulated cyberattacks carried out to identify and exploit vulnerabilities.

- Red teaming: the simulation of a complex, multi-stage attack by a determined adversary, often to test an organization's detection and response capabilities.

Related practices are shown in this graphic:

Offensive Security Testing Practices (source: CSA).

CSA notes actual practices can differ based on various factors such as organizational maturity and risk tolerance.

A primary focus of the paper is the shift in cybersecurity caused by advanced AI such as large language models (LLMs) that power generative AI.

"This shift redefines AI from a narrow use case to a versatile and powerful general-purpose technology," said the paper, which details current security challenges and showcases AI's capabilities across five security phases:

- Reconnaissance - Reconnaissance represents the initial phase in any offensive security strategy, aiming to gather extensive data regarding the target's systems, networks, and organizational structure.

- Scanning - Scanning entails systematically examining identified systems to uncover critical details such as live hosts, open ports, running services, and the technologies employed, e.g., through fingerprinting to identify vulnerabilities.

- Vulnerability Analysis - Vulnerability analysis further identifies and prioritizes potential security weaknesses within systems, software, network configurations, and applications.

- Exploitation - Exploitation involves actively exploiting identified vulnerabilities to gain unauthorized access or escalate privileges within a system.

- Reporting - The reporting phase concludes the offensive security engagement by systematically compiling all findings into a detailed report.
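As a rough illustration of the last steps in this sequence, the sketch below ranks findings and compiles them into a plain-text report. The paper does not prescribe any of this: the `Finding` fields, the ordering rule and the sample data are assumptions made purely for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Finding:
    """One weakness recorded during an engagement (hypothetical structure)."""
    title: str
    severity: float    # assumed 0-10 score, in the style of CVSS
    exploitable: bool  # whether the exploitation phase confirmed it


def prioritize(findings: List[Finding]) -> List[Finding]:
    """Order findings: confirmed-exploitable first, then by descending severity."""
    return sorted(findings, key=lambda f: (not f.exploitable, -f.severity))


def build_report(findings: List[Finding]) -> str:
    """Compile the prioritized findings into a simple text report."""
    lines = ["Findings (highest priority first):"]
    for rank, f in enumerate(prioritize(findings), start=1):
        status = "confirmed" if f.exploitable else "unconfirmed"
        lines.append(f"{rank}. {f.title} (severity {f.severity:.1f}, {status})")
    return "\n".join(lines)


# Hypothetical sample data, not taken from the CSA paper.
EXAMPLE_FINDINGS = [
    Finding("Outdated TLS configuration", 5.3, False),
    Finding("SQL injection in login form", 9.8, True),
    Finding("Default admin credentials", 8.1, True),
]
```

Calling `build_report(EXAMPLE_FINDINGS)` lists the two confirmed issues ahead of the unconfirmed one. In an AI-assisted workflow, an LLM might draft the narrative around such a list, with a human reviewing the result.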

"By adopting these AI use cases, security teams and their organizations can significantly enhance their defensive capabilities and secure a competitive edge in cybersecurity," the paper said.

The paper examines current challenges and limitations of offensive security, such as expanding attack surfaces and advanced threats, and delves deeply into LLMs and advanced AI in the form of autonomous agents.

"An agent begins by breaking down the user request into actionable and prioritized plans (Planning). It then reasons with available information to choose appropriate tools or next steps (Reasoning). The LLM cannot execute tools, but attached systems execute the tool correspondingly (Execution) and collect the tool outputs. Then, the LLM interprets the tool output (Analysis) to decide on the next steps used to update the plan. This iterative process enables the agent to continue working cyclically until the user's request is resolved," the paper states. That's illustrated with this graphic:

AI Agent Phases (source: CSA).
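The cycle described in the quote can also be sketched in code. What follows is a minimal Python sketch, not CSA's implementation: the `Agent` class, its method names and the two stub tools are hypothetical, and the planning and analysis steps are hard-coded where a real agent would call an LLM.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple


# Hypothetical stub tools; a real agent would wrap actual security tooling.
def lookup_dns(target: str) -> str:
    # 192.0.2.0/24 is an address range reserved for documentation.
    return f"{target} resolves to 192.0.2.10"


def summarize(text: str) -> str:
    return f"summary: {text}"


TOOLS: Dict[str, Callable[[str], str]] = {
    "lookup_dns": lookup_dns,
    "summarize": summarize,
}


@dataclass
class Agent:
    tools: Dict[str, Callable[[str], str]]
    history: List[Tuple[str, str]] = field(default_factory=list)

    def plan(self, request: str) -> List[str]:
        # Planning: break the request into ordered steps (fixed here;
        # an LLM would produce this list in a real agent).
        return ["lookup_dns", "summarize"]

    def reason(self, step: str, last_output: Optional[str],
               request: str) -> Tuple[str, str]:
        # Reasoning: pick the tool and its input from what is known so far.
        argument = last_output if last_output is not None else request
        return step, argument

    def execute(self, tool_name: str, argument: str) -> str:
        # Execution: the attached system, not the model, runs the tool.
        return self.tools[tool_name](argument)

    def analyze(self, output: str, plan: List[str]) -> List[str]:
        # Analysis: interpret the output and update the plan.
        # This stub abandons the remaining steps if a tool reports an error.
        return [] if "error" in output.lower() else plan

    def run(self, request: str, max_steps: int = 10) -> Optional[str]:
        plan = self.plan(request)
        last_output: Optional[str] = None
        for _ in range(max_steps):  # cap iterations so the loop always ends
            if not plan:
                break
            step = plan.pop(0)
            tool_name, argument = self.reason(step, last_output, request)
            last_output = self.execute(tool_name, argument)
            self.history.append((tool_name, last_output))
            plan = self.analyze(last_output, plan)
        return last_output
```

Running `Agent(TOOLS).run("example.com")` walks through both steps and records each tool call in `history`. The `max_steps` cap is a design choice of this sketch, there to keep the iterative loop from running indefinitely.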

Other topics include:

As for what organizations can do to capitalize on advanced AI for offensive security, CSA provides these recommendations:

- AI Integration: Incorporate AI to automate tasks and augment human capabilities. Leverage AI for data analysis, tool orchestration, generating actionable insights and building autonomous systems where applicable. Adopt AI technologies in offensive security to stay ahead of evolving threats.

- Human Oversight: LLM-powered technologies are unpredictable, can hallucinate, and cause errors. Maintain human oversight to validate AI outputs, improve quality, and ensure technical advantage.

- Governance, Risk, and Compliance (GRC): Implement robust GRC frameworks and controls to ensure safe, secure, and ethical AI use.

"Offensive security must evolve with AI capabilities," CSA said in conclusion. "By adopting AI, training teams on its potential and risks, and fostering a culture of continuous improvement, organizations can significantly enhance their defensive capabilities and secure a competitive edge in cybersecurity."

The full report is available on the CSA site (registration required).

About the Author

David Ramel is an editor and writer at Converge 360.