Purdue App Puts Learning Data into Students' Hands

Engineering students at Purdue University can track their study behaviors with Pattern, a quantified-self tool designed to help learners regulate and improve their habits.

Category: Student Systems & Services

Institution: Purdue University

Project: Pattern: Quantified Self-Assessment for Students

Project lead: Beth Holloway, assistant dean of undergraduate education, director of women in engineering

Tech lineup: Developed in-house

Learning analytics tools have become increasingly valuable for college and university administrators looking to boost student success. But can data also inform decision-making on the part of students themselves? A project at Purdue University (IN) explores that possibility by taking advantage of the "quantified self" movement (made popular by health-tracking apps such as Fitbit) and putting the data into students' hands.

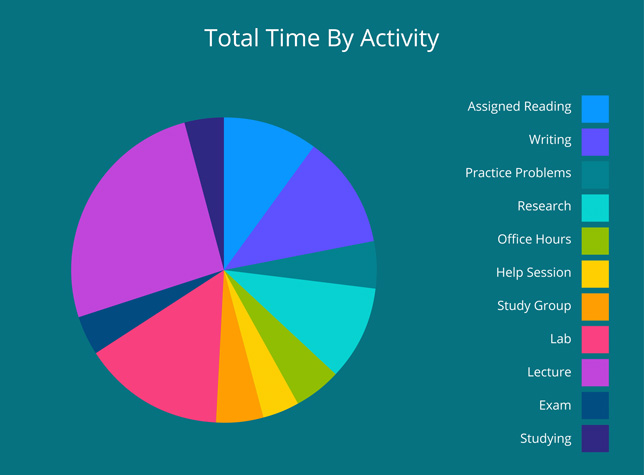

Pattern, one of several teaching and learning apps developed by Purdue Teaching and Learning Technologies over the past few years, allows students to self-track their academic and extracurricular pursuits and rate how productive they are. The app also lets them compare their behaviors with those of other students to see which activities may yield the best results. Pattern can suggest when to study, how long to spend on tasks and how to use time more efficiently.


Beth Holloway, assistant dean of undergraduate education in the College of Engineering and director of women in engineering, set out to apply Pattern to a course engineering students find particularly difficult. Her project piloted the use of Pattern with 60 students in a single section of a mechanical engineering course on thermodynamics during the Fall 2016 semester. At Purdue, the course has a DFW (drop, fail, withdraw) rate of 25 to 40 percent, depending on the semester. Many students struggle in the course and end up changing majors because of a low grade point average. The mechanical engineering department had taken steps to try to improve success in the course, including opening a tutorial center and offering supplemental instruction. "Those things have been in place for several semesters and have had some impact, but not as much as we would have liked to see," Holloway said.

"I wondered if we gave students a very specific way to track their time and were able to mirror that back to them and have them be able to compare vs. others in their class, perhaps that would help them calibrate to this course and the expectations of what they should be doing," she added.

Holloway offered extra credit to students who used Pattern in the course. Students logged their study habits for a total of four weeks during the semester, around the three exams and the final exam. She and educational technologist Brandon Karcher began compiling the student data and combining it with test scores in the course. "I flashed up a slide in class that said, students who earned A's vs. B's vs. C's studied this many hours," she said. "We didn't do statistical significance on pilot data, but you could definitely see there is a connection between hours studied and grades earned. Our hope was that reflecting that back to the class would provide guidance and help change some behaviors."

Because those initial results were promising, other engineering instructors participated in an expanded study in seven courses, with nearly 300 students involved. The data from those courses is now being evaluated to assess whether use of the app is having an impact in these difficult engineering courses.

In planning how the app would be used, Karcher said, he and Holloway had to think about how to tailor Pattern to their purposes. "Pattern has a default set of things you can log that are more generic," he said. "In this case it was more exam-specific. Instead of logging about reading a book or doing homework or going to class, this was much more focused. We had to narrow down the specific activities for exams."

Pattern asks students to self-report time spent reading course materials, working on problems, attending help sessions, going to office hours and more.

Karcher noted that the widespread use of quantified-self apps might help students grasp the value of using an app like Pattern. "When we talk to students about tracking this type of data, it is still a new concept applying it in education, but they do value being able to visualize what they are doing," he said. "A fun and interesting thing about this project is that we are introducing the idea of quantified self in an area that they hadn't thought about before."

Because using Pattern was so new to students, Holloway and Karcher felt the extrinsic reward of extra credit was needed to get them to try it. "Long term, we would want there to be enough perceived value that they are intrinsically motivated to use it," Karcher said. "That is one of the things we are moving toward. The tool is still under development."

Pattern has been licensed to other universities (such as the University of Wisconsin-Madison), so another goal is to collect data on a cross-institutional basis. Karcher said his team would like to partner with another large university to study engineering students across institutions and see whether the results are the same or different.

In addition, said Holloway, the project could help the engineering department understand student help-seeking behaviors by tracking when students go to office hours, a tutorial center or a help session. It could also help instructors design curriculum to match patterns of success and give them a method for providing formative feedback.

"This use of Pattern could also allow an instructor to say on the first day of class, 'students who are successful in this class do x, y and z' — and it is not just anecdotal," she said. "There is data to back it up."

She stressed another novel aspect of the project: Learning analytics are usually designed for administrators and faculty members to use, but the goal here was to feed data right back to students. "I think sometimes we don't always think through the fact that the learning environment has two groups in it: instructors and students. They both need to be committed. If we are only feeding data back to administrators and instructors, then we are missing out on an opportunity to have students be agents of their own success."
